LogitBoost

In machine learning and computational learning theory, LogitBoost is a boosting algorithm formulated by Jerome Friedman, Trevor Hastie, and Robert Tibshirani. The original paper[1] casts the AdaBoost algorithm into a statistical framework. Specifically, if one considers AdaBoost as a generalized additive model and then applies the cost functional of logistic regression, one can derive the LogitBoost algorithm.
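The two-class procedure described in the cited paper can be sketched as a sequence of Newton-style steps, each fitting a weak learner to a weighted "working response". The following is a minimal sketch under several assumptions that are not part of this article: inputs are one-dimensional with at least two distinct values, labels are coded as 0 or 1, the weak learner is a regression stump, and the helper names (`fit_stump`, `logitboost`, `predict`) and the clipping constants are purely illustrative. The factor of 1/2 in the update follows the paper's parameterization, in which the class probability is p(x) = 1 / (1 + e^{-2F(x)}).

```python
import math

def fit_stump(xs, zs, ws):
    """Weighted least-squares regression stump on 1-D inputs.

    Returns (threshold, left_value, right_value). Assumes xs contains
    at least two distinct values and all weights are positive.
    """
    best = None
    values = sorted(set(xs))
    # Candidate thresholds lie midway between consecutive distinct inputs.
    for thr in [(a + b) / 2 for a, b in zip(values, values[1:])]:
        lw = lz = rw = rz = 0.0
        for x, z, w in zip(xs, zs, ws):
            if x <= thr:
                lw += w
                lz += w * z
            else:
                rw += w
                rz += w * z
        left, right = lz / lw, rz / rw  # weighted means on each side
        err = sum(w * (z - (left if x <= thr else right)) ** 2
                  for x, z, w in zip(xs, zs, ws))
        if best is None or err < best[0]:
            best = (err, thr, left, right)
    return best[1:]

def logitboost(xs, ys, rounds=20):
    """Two-class LogitBoost sketch; ys must be coded as 0 or 1."""
    n = len(xs)
    F = [0.0] * n   # current additive model evaluated at each training point
    p = [0.5] * n   # current probability estimates for class 1
    stumps = []
    for _ in range(rounds):
        # Weights and working responses of the Newton step.
        # The floor on w and the clip on z are illustrative safeguards.
        ws = [max(pi * (1.0 - pi), 1e-10) for pi in p]
        zs = [max(-4.0, min(4.0, (y - pi) / w))
              for y, pi, w in zip(ys, p, ws)]
        thr, left, right = fit_stump(xs, zs, ws)
        stumps.append((thr, left, right))
        for i, x in enumerate(xs):
            F[i] += 0.5 * (left if x <= thr else right)
            p[i] = 1.0 / (1.0 + math.exp(-2.0 * F[i]))
    return stumps

def predict(stumps, x):
    """Classify x as 1 if the summed model output is positive, else 0."""
    F = sum(0.5 * (l if x <= t else r) for t, l, r in stumps)
    return 1 if F > 0 else 0
```

For example, `predict(logitboost(xs, ys), x)` classifies a new point `x` after training on the lists `xs` and `ys`. Stumps are used here only because they keep the sketch short; any regression method that accepts per-example weights could take their place.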

Minimizing the LogitBoost cost function

LogitBoost can be seen as a convex optimization problem. Specifically, given that we seek an additive model of the form

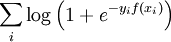

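the LogitBoost algorithm minimizes the logistic loss:

$$\sum_i \log\left(1 + e^{-y_i f(x_i)}\right)$$

where the sum runs over the training examples and each label $y_i$ is coded as $-1$ or $+1$.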
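To make the objective concrete, the logistic loss of an additive model, that is, the sum over training examples of log(1 + exp(-y_i f(x_i))), can be evaluated directly. The sketch below assumes labels coded as -1 or +1 and weak learners given as plain Python callables; the function names `additive_model` and `logistic_loss` are illustrative and do not come from the source.

```python
import math

def additive_model(alphas, learners, x):
    """Evaluate f(x) = sum_t alpha_t * h_t(x)."""
    return sum(a * h(x) for a, h in zip(alphas, learners))

def logistic_loss(alphas, learners, xs, ys):
    """Sum over examples of log(1 + exp(-y_i * f(x_i))), y_i in {-1, +1}."""
    total = 0.0
    for x, y in zip(xs, ys):
        margin = y * additive_model(alphas, learners, x)
        # Numerically stable evaluation of log(1 + exp(-margin)).
        if margin > 0:
            total += math.log1p(math.exp(-margin))
        else:
            total += -margin + math.log1p(math.exp(margin))
    return total
```

Because each term is a convex function of a quantity that is linear in the coefficients, the loss is convex in the coefficient vector for a fixed set of weak learners. One way to check this numerically is to confirm that the loss at the midpoint of two coefficient vectors does not exceed the average of the losses at the two endpoints.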

References

- [1] Jerome Friedman, Trevor Hastie, and Robert Tibshirani. Additive logistic regression: a statistical view of boosting. Annals of Statistics 28(2), 2000, pp. 337–407. http://citeseerx.ist.psu.edu/viewdoc/summary?doi=10.1.1.51.9525

This article is issued from Wikipedia - version of the 12/4/2014. The text is available under the Creative Commons Attribution/Share Alike but additional terms may apply for the media files.